Fine-tuning GPT-J online without spending a lot of money

Amarbot has been using GPT-J (fine-tuned on my chat history) to talk like me. Fine-tuning isn't easy if you follow the instructions in the main repo, and you need a beefy GPU. I managed to do my training in the cloud quite cheaply using Forefront. I had a few issues (some billing-related, some privacy-related), but Forefront seems to be a small startup, and the founder himself helped me resolve them on Discord. As far as I could see, this was the cheapest and easiest way out there to train GPT-J models.

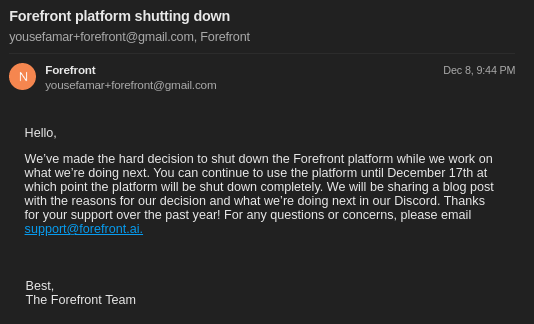

Unfortunately, they're shutting down.

As of today, their APIs are still running, but the founder says they're winding down as soon as they've sent all customers their requested checkpoints (I'm still waiting for mine). This means Amarbot might soon be without AI responses for a while, until I find a different way to run the model.

As for fine-tuning, there no longer seems to be an easy way to do it (unless Forefront open-sources their code, which they might, but even then someone has to host it). maybe#6742 on Discord has made a Colab notebook that fine-tunes GPT-J in 8-bit and kindly sent it to me.
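To give a rough sense of why 8-bit helps, here is a back-of-the-envelope sketch of how much memory the weights alone take at different precisions. It is not taken from the notebook. It assumes GPT-J has about 6 billion parameters, and it ignores activations, gradients, and optimizer state, so real training needs more than this.

```python
# Rough estimate of weight memory for a ~6-billion-parameter model such as GPT-J.
# Assumption: 6e9 parameters (approximate), counting weights only.
PARAMS = 6_000_000_000

# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(params: int, bytes_per_param: int) -> float:
    """Return the weight footprint in gigabytes (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


def estimate_all(params: int = PARAMS) -> dict:
    """Return the weight footprint for every precision listed above."""
    return {name: weight_memory_gb(params, size) for name, size in BYTES_PER_PARAM.items()}


if __name__ == "__main__":
    for name, gb in estimate_all().items():
        print(f"{name}: ~{gb:.0f} GB")
```

Under these assumptions the weights come to roughly 24 GB in fp32, 12 GB in fp16, and 6 GB in int8, which is why an 8-bit approach is so much easier to fit on a single modest GPU.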

I've always thought that serverless GPUs would be the holy grail of the whole microservices paradigm, and we might be getting close. Hopefully that will make fine-tuning easy and accessible again.